The European Organization for Nuclear Research’s Globe for Science and Innovation in Meyrin, Switzerland. Photograph: Michael Jungblut for CERN

Editors’ Note: This is the final piece in an eight-part series on the search for new physics at CERN.

This series began in March by telling you about a curious anomaly—a “diphoton excess at 750 GeV”—found in two experiments at the Large Hadron Collider (LHC) last year and announced last December. To understand all this, we’ve journeyed through a wide expanse of contemporary particle physics: quantum field theory and the Standard Model, the construction and operation of the LHC, and the story of the discovery of the Higgs boson. It is time we return to the beginning.

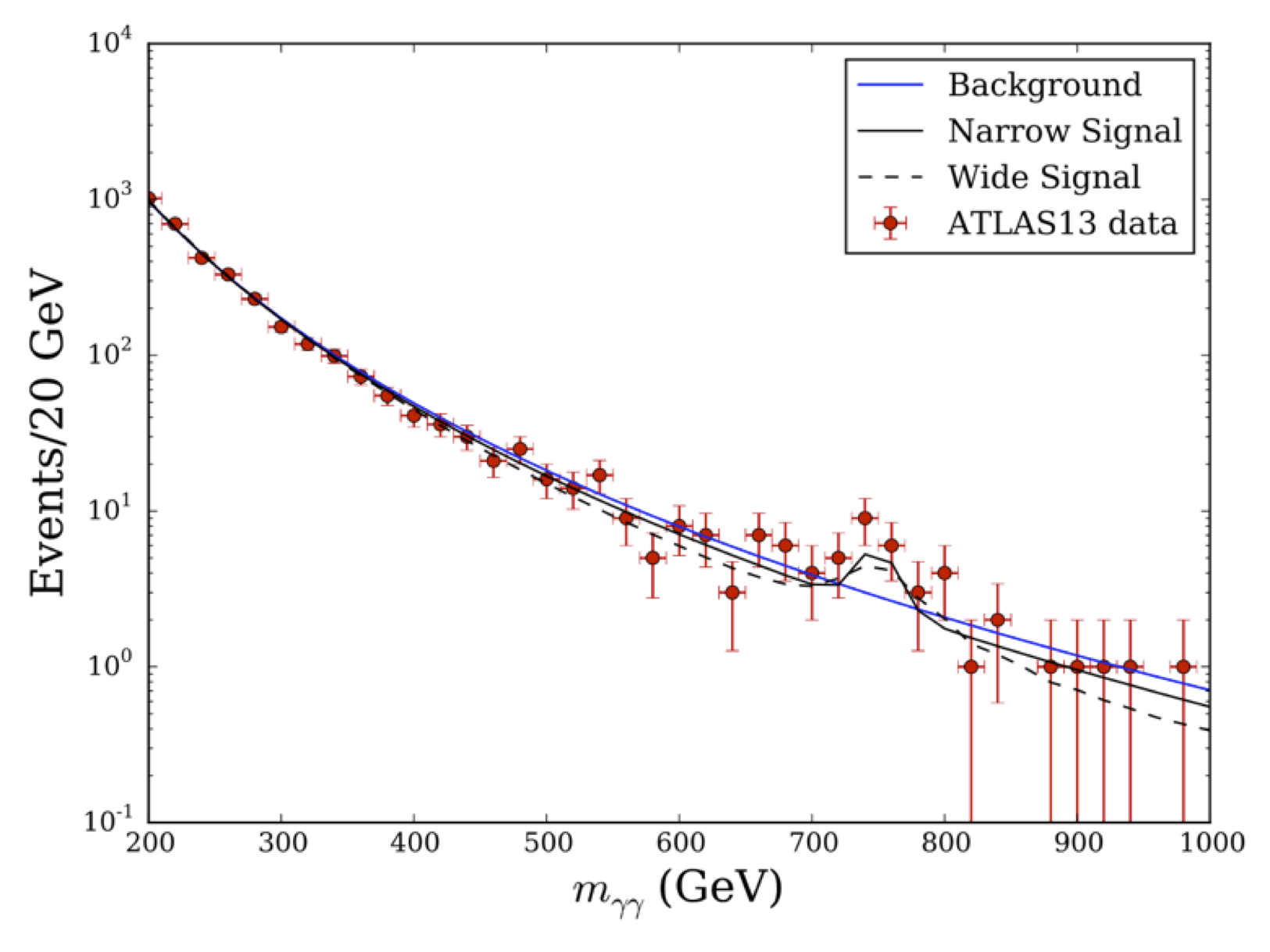

So let me remind you about the anomaly that caused such commotion. Last year, during the LHC’s second run at a higher energy, the ATLAS and CMS detectors picked up an unusually large number of pairs of high-energy photons as a result of a small number of extra proton-proton collisions: no more than two dozen or so such events in all. The energy and momentum of the pair of photons summed to an “invariant mass” of 750 GeV. What made these diphoton events special was that there were more of them than we would expect to see based on the Standard Model. (Hence, a diphoton excess.) Graphically, this anomaly showed up as a bump (yes, that is the technical word we use) in a falling spectrum of the number of diphoton events predicted by the Standard Model. The image below depicts the Standard Model predictions as a background curve, in blue, while the 2015 ATLAS data are shown in black. (The CMS data look fairly similar.)

ATLAS data from 2015, showing a clear bump at 750 GeV. Image: CERN

What exactly are we to make of the very clear bump at 750 GeV? (Actually, since the CMS data look similar, there were really two bumps, appearing in two different experiments.) That is the question that hundreds of particle physicists around the world rushed to answer after this data was announced last December. Since then, more than 350 papers have appeared on the arXiv—an online repository of math and physics research—with “diphoton” or “750” in the title. (The actual count of relevant papers is likely over 500.) The community’s response to these findings has been truly electric.

As you can see in the images, the Standard Model does predict some diphoton events at 750 GeV, though a very small number. (Note that the vertical axis is logarithmic.) The ATLAS data deviate sharply from the blue trend (and the CMS data somewhat less so), but that in itself does not matter. What matters is whether the bumps are statistically significant. In other words, what matters is whether the bumps are so big that their presence cannot be explained by random statistical fluctuation around the background Standard Model prediction.

Consider the following analogy. You are going to toss a fair coin—that is, a coin that is evenly weighted so it will land face up and face down with equal probability. If you toss this fair coin thirty times, you don’t expect to get exactly fifteen heads. At least, you would not be too surprised if the number of heads turned out to be sixteen, and you might even be a little surprised if you managed to get exactly fifteen. Some basic statistics shows that for this experiment, the average size of the fluctuations around the mean is around three. (For those in the know, I’m referring to the standard deviation.) That means that it would be fairly typical for you to get anywhere between twelve and eighteen heads.

So if you repeat this little experiment over and over, tossing a fair coin thirty times again and again, quite often you’ll observe a slight excess or bump of eighteen heads. (We would call this 1-sigma significance, since the deviation from fifteen is the same size as the expected fluctuation.) Much less often, you’d get twenty-one heads. (We’d call this a 2-sigma fluctuation, since the deviation from fifteen is about twice the expected fluctuation.) But only very, very rarely—roughly once every 3.5 million experiments—would you observe a huge bump of twenty-nine heads. (We’d say this event was a 5-sigma occurrence, since the deviation from fifteen is about five times the expected fluctuation.) Now turn this reasoning around and imagine a slightly different experiment: again you toss a coin thirty times, but this time you don’t know in advance whether the coin is fair. If, in a single set of thirty flips of this coin, you got twenty-nine heads, you would have pretty strong evidence for concluding that the coin isn’t fair. (The alternative would be to say that the coin is fair, but you were extraordinarily lucky to observe this once-in-a-3.5-million occurrence.)
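For readers who want to check the coin-toss numbers themselves, here is a minimal Python sketch. It computes the standard deviation for thirty tosses of a fair coin and the exact binomial probability of seeing at least eighteen, twenty-one, or twenty-nine heads. The names `N_TOSSES`, `P_HEADS`, and `prob_at_least` are illustrative choices, not anything from an experiment’s software.

```python
from math import comb, sqrt

N_TOSSES = 30   # tosses per experiment, as in the analogy
P_HEADS = 0.5   # a fair coin

mean = N_TOSSES * P_HEADS                          # expected number of heads
sigma = sqrt(N_TOSSES * P_HEADS * (1 - P_HEADS))   # about 2.7, i.e. "around three"


def prob_at_least(k: int, n: int = N_TOSSES) -> float:
    """Exact probability of k or more heads in n tosses of a fair coin."""
    return sum(comb(n, j) for j in range(k, n + 1)) / 2 ** n


for heads in (18, 21, 29):
    z = (heads - mean) / sigma      # distance from the mean in standard deviations
    p = prob_at_least(heads)
    print(f"{heads} or more heads: {z:.1f} sigma, about 1 in {1 / p:,.0f}")
```

Run as written, the last line should come out near 1 in 35 million rather than 1 in 3.5 million. The 3.5 million figure is the Gaussian 5-sigma convention; the exact tail of a thirty-toss coin is thinner that far from the mean, so the sigma labels in the analogy are best read as rules of thumb.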

We physicists had to think about a similar problem in the case of the diphoton bump. Were the data like getting eighteen or maybe even twenty-one heads in thirty tosses of a coin? Or were they more like getting twenty-nine? In other words, was this bump—this upward fluctuation—within the range of what could reasonably be expected on the basis of the Standard Model, or was this evidence of something new? In the latter case, we would have pretty strong evidence to think that the bump was produced by some mechanism beyond the Standard Model—say, the decay of some new particle—just like in the coin-tossing experiment we would have pretty strong evidence to conclude that our coin wasn’t fair.

This prospect—possibly having found the first evidence of physics beyond the Standard Model—got everyone very excited. If the findings really did point to new physics, it would be the biggest news since the Higgs boson. Bigger than the Higgs, in fact. The Higgs boson was kind of a known unknown. It took us a long time—four decades—to find it, but we’d known about the Higgs mechanism since the 1960s, and everyone expected the associated boson to be found once we had a sufficiently powerful particle accelerator. We did expect to find some new physics at the LHC, but no one expected to find a diphoton excess like this one, in particular, and if it really did point to a new particle, no one had any idea how to reconcile it with what we expected to lie beyond the Standard Model. Nor has anyone worked out a completely satisfactory solution since the data were announced, as the hundreds of papers on the topic have fairly conclusively demonstrated (at least to my mind). If the diphoton data were significant, we would be facing the complete unknown.

• • •

So were the data significant, or weren’t they? My own professional contribution to the study of this anomaly was to analyze the combination of the ATLAS and CMS data. In my original calculations, which were admittedly somewhat crude (as I’m a theoretical physicist and thus lack some technical knowledge of the experimental techniques involved), I found that, assuming no new physics beyond the Standard Model, the odds of both ATLAS and CMS seeing the observed number of excess diphoton events at 750 GeV were something like 1 in 16,000 (about a 4-sigma excess). But these calculations applied only to excesses at this particular mass. Adjusting the calculation to account for the fact that we would be equally excited to see an excess anywhere in the invariant mass spectrum (the look-elsewhere effect), I found that a conservative estimate of the probability of seeing these bumps, assuming no new physics beyond the Standard Model, was around 1 in 20 (a 2-sigma excess).

But 1 in 20 is not nearly enough evidence to claim discovery in particle physics. For that we require 1 in 3.5 million (5-sigma evidence). To see whether the bumps could pass this much higher standard of significance, we would need to collect more data.
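The translation between “sigma” and “1 in N” can be sketched in a few lines of Python, assuming the fluctuations follow a Gaussian distribution. The 1 in 3.5 million threshold corresponds to the one-sided 5-sigma tail, while 1 in 20 is close to the two-sided 2-sigma figure. The `global_p` function is a deliberately crude stand-in for the look-elsewhere effect: it assumes a number of fully independent places to look, which is a simplification, and the trial count used below is hypothetical.

```python
from math import erfc, sqrt


def one_sided_p(z: float) -> float:
    """Probability that a Gaussian fluctuates upward by at least z sigma."""
    return 0.5 * erfc(z / sqrt(2))


def two_sided_p(z: float) -> float:
    """Probability of a fluctuation of at least z sigma in either direction."""
    return erfc(z / sqrt(2))


def global_p(local_p: float, n_trials: int) -> float:
    """Chance of at least one such fluctuation among n_trials independent looks."""
    return 1 - (1 - local_p) ** n_trials


for z in (1, 2, 3, 4, 5):
    print(f"{z}-sigma: one-sided 1 in {1 / one_sided_p(z):,.0f}, "
          f"two-sided 1 in {1 / two_sided_p(z):,.0f}")

# Hypothetical illustration: a 3-sigma local bump and 100 independent mass windows.
print(f"global p-value: {global_p(one_sided_p(3), 100):.3f}")
```

The point of the last line is only qualitative: a fluctuation that looks rare at one chosen mass becomes much less remarkable once you allow for all the other masses where it could have appeared.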

To see why, go back to our coin flip analogy. Suppose you toss your coin thirty times and get twenty heads. Is the coin fair? Well, obviously it is not completely unfair—you didn’t get heads every time. But maybe it is just a little unfair, just unbalanced enough so that instead of having a 50 percent chance of heads each flip, it has a 60 or 70 percent preference for heads. Unfortunately, when you flip only thirty times, you don’t really have enough information to tell these options apart—at least not with any great statistical power. So what do you do? You collect more data: you keep flipping the coin. If the coin is truly fair, then over a large enough set of coin flips, you will see the results tend to even out to a 50/50 split. The more times you flip the coin, the greater the certainty you can have in your conclusion.
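To put rough numbers on why thirty flips are not enough, the sketch below estimates how far above fifteen-in-thirty (or its equivalent for longer runs) a biased coin would typically land, measured in units of the fair coin’s standard deviation. The function name `expected_sigma` and the list of flip counts are illustrative assumptions, and the calculation uses only the average excess, ignoring the scatter of the biased coin itself.

```python
from math import sqrt


def expected_sigma(n_flips: int, p_true: float) -> float:
    """Average excess of heads over a fair coin, in fair-coin standard deviations."""
    excess = n_flips * (p_true - 0.5)    # extra heads expected from the bias
    fair_sigma = sqrt(n_flips * 0.25)    # fluctuation size for a fair coin
    return excess / fair_sigma


for n in (30, 100, 300, 1000):
    print(f"{n:>4} flips: 60% coin ~ {expected_sigma(n, 0.6):.1f} sigma, "
          f"70% coin ~ {expected_sigma(n, 0.7):.1f} sigma")
```

With thirty flips both biased coins sit within a couple of standard deviations of a fair one, which is why the three possibilities are hard to tell apart. The separation grows only as the square root of the number of flips.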

Now, a 1 in 20 finding was enough to get theorists excited, to get our minds working and the papers flowing, but everyone knew that the real test was yet to come. In May, the LHC was turned back on and began collecting data again on proton collisions at 13 TeV of energy.

And oh, how it collected data.

The unit we use to describe the amount of data that the LHC has collected is called an inverse femtobarn, denoted fb⁻¹. It’s a strange unit, and I’m not going to go into detail about what it means. Just know that more inverse femtobarns mean more data. The dataset that sealed the Higgs discovery in 2012 was 20 fb⁻¹ in ATLAS and 20 fb⁻¹ in CMS. This was data collected at 8 TeV of collision energy.

The 2015 data containing the diphoton anomaly was only 3.2 fb⁻¹ for ATLAS and 2.7 fb⁻¹ for CMS. Though this was less data than had been collected during the Higgs search, it was data of collisions at higher energy (13 TeV instead of 8 TeV), so it was not impossible that new things might be found in it. That is, after all, the point of going to higher collision energies: it makes it easier for the quantum fields in the protons to excite undiscovered new quantum fields and shake some new particles loose. It took the LHC eight months in 2012 to collect 20 fb⁻¹. In the three months since the LHC turned back on this summer, 17 fb⁻¹ have been delivered to each experiment, again at much higher energy than ever before, though only about 12–13 fb⁻¹ are ready for analysis. These numbers are frankly astounding, and they blow me away every time I look at the plots keeping a running tally of the data collected by the LHC.

So, both ATLAS and CMS now have completely new diphoton datasets that are about four times as large as their 2015 datasets—and they are rapidly growing as we speak. This factor of four is very important. It increases the resolving power of our statistical calculations. More specifically, it means that the same process should yield data twice as significant. Why?

Well, the Standard Model predicts that a certain percentage of ATLAS or CMS collisions will yield diphoton events. Therefore, if we run four times as many collisions at the LHC, we would expect to see four times as many diphoton events. By similar reasoning, our prediction for the true number of diphoton events—assuming new physics beyond the Standard Model—should quadruple. Therefore, the size of the bump itself—the excess number of diphoton events over the Standard Model prediction—should quadruple. But—and here’s the important point—freshman statistics tells us that when the amount of data is quadrupled, the average size of the random background fluctuations only doubles. (That’s because these fluctuations are proportional to the square root of the number of events.) Now, we always measure the significance of a bump relative to the average size of the background fluctuations. Putting all this together, we see that, relative to the average size of the new fluctuations, the new bump will look twice as significant as the bump we got last time. By this (somewhat naive) reasoning, a signal that was at 2-sigma significance before should increase to 4-sigma significance—not quite enough to warrant a discovery claim, but more than enough to take what was already an immense level of excitement and raise it to an unimaginable fever pitch.
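The square-root argument can be checked with a short Python sketch. It uses the naive estimate of significance as bump size divided by the square root of the background count; real analyses use more careful likelihood fits. The event counts are hypothetical, chosen only so that the starting point is a 2-sigma bump.

```python
from math import sqrt


def significance(signal: float, background: float) -> float:
    """Bump size divided by the typical background fluctuation, sqrt(background)."""
    return signal / sqrt(background)


# Hypothetical event counts, chosen only to give a 2-sigma starting point.
signal_2015, background_2015 = 20.0, 100.0

for data_factor in (1, 4, 16):
    s = signal_2015 * data_factor        # the bump grows in proportion to the data
    b = background_2015 * data_factor    # so does the background
    print(f"{data_factor:>2}x data: bump = {s:.0f} events, "
          f"fluctuation = {sqrt(b):.0f} events, "
          f"significance = {significance(s, b):.1f} sigma")
```

Each quadrupling of the data multiplies the bump by four but the fluctuation by only two, so the significance doubles.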

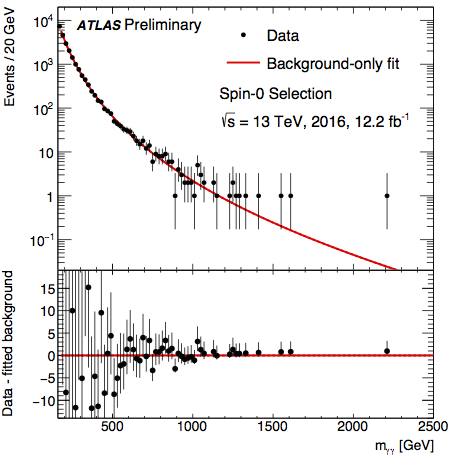

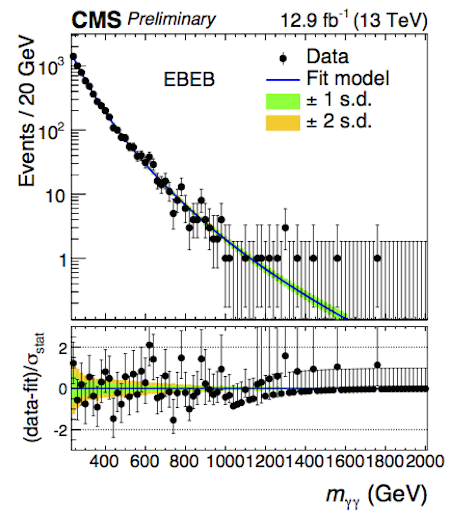

So that was the situation as ATLAS and CMS prepared to release their new results last week at the 38th International Conference on High Energy Physics (ICHEP) in Chicago. And, first thing Friday morning, they did release their results. These are the diphoton events they saw:

ATLAS and CMS data from 2016: the bumps at 750 GeV have disappeared. Image: CERN

And here is a plot showing the statistical significance of the 2015 (blue) and 2016 (red) ATLAS data; notice the red data are less than 1-sigma at 750 GeV:

The 2016 ATLAS data are no longer statistically significant. Image: CERN

There are wiggles in the data. But critically, at 750 GeV, there is no huge bump of extra events. The anomaly disappeared without a trace. With that, it was over.

What happened? In short: statistics happened. No one made any mistakes; the experimentalists performed their jobs flawlessly. The 2015 bumps were “real” in the sense that there were really more pairs of photons at 750 GeV than the average predicted by the Standard Model. Those excess diphotons really were collected by the ATLAS and CMS detectors, and they really were analyzed correctly. What happened is what must, occasionally, happen: an upward fluctuation in a randomly distributed process.

This has happened before: I described the vagaries of the decades-long search for the Higgs boson, in which numerous times, 2- and 3-sigma evidence for a new particle simply evaporated under the scrutiny of new data. It will certainly happen again. This is why particle physics demands such a punishingly high level of statistical certainty before we can claim discovery: so that we don’t embarrass ourselves by having to retract a new particle when more evidence comes in.

This high standard protects us only from statistical errors, however. There can also be systematic errors, which arise when we fail to fully understand our experimental apparatus or background theories. Such systematic errors are far more pernicious: they do not necessarily disappear with more data. Rather, we correct them only after we come to a better understanding of the source of the error.

This is the first time I’ve lived through the statistical dissolution of an anomaly in physics, but in my (relatively short) time in this field I have seen at least five similar anomalies (both in collider physics and my other field of research, particle astrophysics) disappear due to systematic errors of one kind or another (though these anomalies were never claimed as unambiguous discoveries). While you do get a bit more jaded with experience, seeing new physics snatched away always leaves more than a little sting.

• • •

So, if the experimentalists did their job perfectly, and reported their results along with the errors and statistical uncertainties exactly as they should, did the theorists make a mistake in pouring so much effort into something that was “only” 2 sigma? This is a more difficult question. My personal feeling is no, not really. This attitude puts me in opposition to a number of my experimental colleagues, a nontrivial number of whom tend to say: “We told you it was only 2 sigma, and it would likely go away. It went away. Why didn’t you stay calm and wait for more data?”

I can only respond that trying to get theorists to sit quietly and not ponder hypotheticals would be like trying to herd cats. When things are working as they should, physicists have the freedom to explore the ideas they find interesting; this is true for both theorists and experimentalists. But given the complexity of the experiments these days, the experimentalists have to operate under more constraints: no one is allowed to just take the ATLAS detector for a spin to test their pet theory since so many people have a stake in its construction and operation. Theorists, on the other hand, work with paper, pencils, blackboards, and some modest computing resources. If we want to go down a rabbit hole that may not pan out, we have no one to answer to but ourselves—keeping in mind the opinion of our peers, who are the people responsible for hiring or tenure decisions. So when something odd comes along, some theorists are going to follow their noses and look into it. In the case of the diphoton anomaly, the barrier to entry was relatively low: the anomaly was in a relatively simple class of events, so even people who were not as deeply involved in LHC theory could throw their hat into the ring.

Did we need all 350+ papers on this topic? Probably not. But we did get interesting ideas from some of them: concrete predictions were made, tests of various ideas were proposed, and possible connections to other theoretical ideas were put forward. You can’t know ahead of time who is going to write the interesting papers. And like herding cats, you can’t stop physicists from writing these papers anyway, so don’t bother. In the end, these flights of theoretical fancy will be called to account when more data comes to light. In this case, that took only six months.

One thing I always say, in the aftermath of these anomalies, is that we theorists should judge them by whether the rush of papers “explaining” the odd results reveals some interesting, yet unexplored, area of theoretical physics.

Let me give you an example. In 2008, there was another anomaly: a satellite observatory called PAMELA saw an unexplained excess of positrons at very high energies throughout the Milky Way. Theorists got very excited: perhaps these positrons were evidence of dark matter annihilating right in front of our eyes. (Well, not right in front, since the positrons in question were coming from up to several thousand light-years away.)

But that idea was difficult to fit into the mainstream understanding of dark matter. Some of the numbers just didn’t add up. It was soon realized, though, that you could get the idea to work, and work beautifully, if dark matter had some plausible properties that we just had never thought much about before. This oversight had occurred for no real reason; the possibility had just been overlooked in favor of the standard story about dark matter.

Now, it turned out that the PAMELA anomaly is almost certainly the result of a nearby pulsar injecting positrons into our corner of spacetime. We weren’t seeing the annihilation of dark matter; we were seeing antimatter being produced by the magnetic field of the corpse of a dead star. (The thing I love about physics is that even the disappointing answers are incredibly awesome.) However, the theoretical avenues that our response to the PAMELA anomaly opened are still here with us, and still interesting.

Anomalies can get us out of our ruts and force us to think about new ideas. That doesn’t happen for all anomalies, of course, and whether it will happen with the diphoton anomaly remains to be seen. Certainly I have been thinking about some new directions that could have explained the anomaly. They were research paths I wouldn’t necessarily have gone down without this anomaly for motivation. Whether these paths remain theoretically interesting in the absence of the anomaly is something only time will tell.

• • •

So where does the disappearance of the diphotons leave particle physics?

In one sense, exactly where we were before. The hypothetical particle was an unexpected beast: we could not fit it into any of our pre-existing ideas. The most popular candidate for new physics, supersymmetry, could not accommodate it (or at least, the minimal version of supersymmetry we theorists spend the most time thinking about). That didn’t make the anomaly wrong (only more data did that), nor did it make it less welcome. In fact, part of what I found appealing about the anomaly was that it was so unusual and unexpected.

My personal opinion prior to the anomaly was that we don’t yet have the right theory of physics beyond the Standard Model, and that we needed some new discovery to point the way. Well, here was something completely unlike any of our pre-existing notions of what form new physics would take, which made me happy.

But, now that the anomaly has disappeared, the lack of a new particle at 750 GeV puts no well-motivated ideas about new physics in any peril—none called for it, and so none will be in danger without it. By contrast, the absence of a Higgs boson would have called immense swaths of our understanding of the Universe into question.

In another sense, the disappearance of our latest anomaly heightens a sense of unease among particle physicists, especially theoretical physicists.

We have solid theoretical reasons to expect that new physics does exist—though in what form we cannot say with any certainty—and that the new physics exists at an energy scale that can be probed at the LHC. Those reasons have to do with proposed solutions to the Hierarchy or Naturalness Problems, which I wrote about last time. Given that the energy scale of the Higgs mechanism is so much lower than the energy scale of gravity, we suspect that there must be something new at a few TeV of energy which explains the existence of these two widely separated energy scales.

But these arguments suggested that new physics would be visible at the LHC already. The other major results so far from ICHEP have been limits on hypothesized particles invented to explain the Hierarchy Problem. In particular, we now know that any partners of gluons and quarks (such as those proposed by supersymmetry) must be heavier than 1.1–1.8 TeV (depending on the model). The higher these mass scales get pushed, the less plausible our solutions to the Hierarchy Problem become. We are already reaching a point where many of our cherished models, developed in the decades following the theoretical construction of the Higgs mechanism and before the discovery of the Higgs boson, seriously conflict with the data. If those models are to survive, discovery of a new particle “must” happen soon.

I mentioned above that the expected size of a signal of new physics increases as the number of particle collision events, while the size of the background fluctuations increases only as the square root of the number of events. What this means in practice is that the largest “jump” in our sensitivity comes from the first significant chunk of data at a new experiment. After that initial data set is analyzed, if nothing is found, the limits creep up much more slowly, as the square root of the amount of data collected. So, if nothing is found in the early LHC data, we face a long road to collect enough information to push the limits higher.
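The slow creep can be written down in a couple of lines, as a rough sketch for a search dominated by background. The symbols are assumptions introduced here for illustration, not notation from the experiments: $L$ is the amount of data, $\sigma_s$ and $\sigma_b$ are the signal and background rates per unit of data, and $Z$ is the significance.

```latex
% Assumed symbols: L = amount of data, \sigma_s and \sigma_b = signal and
% background rates per unit of data, Z = significance, Z_0 = required threshold.
\[
  S = \sigma_s L, \qquad B = \sigma_b L, \qquad
  Z \approx \frac{S}{\sqrt{B}} = \frac{\sigma_s}{\sqrt{\sigma_b}}\,\sqrt{L}
\]
% Smallest signal rate that reaches the fixed threshold Z_0:
\[
  \sigma_s^{\min} \approx Z_0 \sqrt{\frac{\sigma_b}{L}} \;\propto\; \frac{1}{\sqrt{L}}
\]
```

On this naive scaling, going from one unit of data to four halves the smallest signal rate we can reach, but the next halving takes sixteen units.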

Thus, there is a sense of concern amongst theorists. What if all these ideas we had were wrong? What if the Higgs is it? The diphoton anomaly was a tantalizing hint that we were on the right track (even if it was the epitome of a “who ordered that?” moment), and then it was gone.

To some degree, I share these concerns. We do need new positive results to tell us what the new physics really is, and I worry that we haven’t yet gotten them. However, I think that serious despair over the lack of positive results is premature. The LHC has collected a lot of data, yes, but not all of it has been analyzed, and we have not yet hit the point where we become “statistics limited.” That will not happen for years: eventually we will face statistical limitations, but the LHC is capable of delivering an immense amount of data over the next decade and beyond, so even when we hit that statistical limitation, the data set we will be “limited” to will be enormous.

• • •

Though the diphoton results were the most hotly anticipated at ICHEP, they are far from the only ones. There is a firehose of results from ATLAS and CMS right now, and it will take time to understand them. There are no clear signs of new physics, but there are (as always) a few anomalies and discrepancies, though none as big as the diphoton anomaly. Most of these will evaporate as the diphoton excess did, but who knows, one might not. Unfortunately, many of these little wiggles in the data appear in very complicated analyses, which lack a clear “propaganda plot” of the sort that the diphotons had, one with a clear bump that you can point at and say “see: here is something new.” Only time, and hard work, will tell us everything that the data contains. Plus, the results at ICHEP are not the only analyses of LHC data to mine for new physics. Over the next months, more will be released, and they too must be digested and understood. The smart money, as always, is on the Standard Model being able to explain the data. But maybe not. And the only way to know for sure is to go and look.

The LHC is an amazing machine, built over decades by tens of thousands of people. It has already accomplished great things, but it is not done yet. The latest results are not the beginning of the LHC’s efforts, or their end. They are, perhaps, the end of the beginning: we have the most powerful collider in the world run by exceptional physicists, operating at the peak of its capabilities, delivering data at an unparalleled rate. Though the disappearance of a suggestive hint of new physics is always a difficult moment, I still strongly believe that new physics remains to be found, and the LHC remains one of the best places to look.

But it is not the only place. There are other experiments all around the world, of varying sizes and techniques, looking for unexplained behavior in, for example, neutrinos, nuclear decays, and high-intensity beams of electrons or photons. In addition, the astrophysics community continues to make amazing discoveries about the history of the Universe, which have direct impact on the study of particle physics. (This is, after all, how we learned about dark matter.)

I’m sure many of you who were following along from the beginning are a bit disappointed in this result. I am too. But I hope at least to have given you a better understanding of the quantum world, the particles that inhabit it, and why physicists are willing to go to such lengths to better understand it. Though this series has now come to an end, the story of particle physics goes on, and I hope you’ll continue to follow along as we continue our work.