“Whatever there is of value in America Whitman has expressed, and there is nothing more to be said. The future belongs to the machine, the robots.” —Henry Miller

In June 2011, Guernica, an online magazine that I helped to found, received an ominous letter from Google.

“During a recent review of your account, we found that you are currently displaying Google ads in a manner that is not compliant with our program policies,” the letter read. Google cited an essay Guernica published in February of that year, Clancy Martin’s “Early Sexual Experiences.” We were given just three working days to change our site or else—the threat was clear—we could lose the income we were making from Google’s AdSense program, which served advertisements on our pages.

We stood by our violation, and our author. In the process we learned the workings of GoogleSpeak, how it can be used to stand arbitrarily with government despots, and how it serves as the Web’s unofficial censor.

Born in the immediate aftermath of the Iraq war, Guernica is an engaged literary magazine. Politics and art, we believe, are never separate but part of the same cultural cloth. This became particularly clear as the U.S. media collectively failed to stand up to the Bush administration over its shifting rationale for the Iraq war. From the outset we covered feminism and sexual politics in what we have always taken to be a thoughtful, if frequently provocative, way.

The piece that ran us afoul of Google, our multinational revenue partner, was part of an issue themed around innovative memoir. We brought in a guest editor, Deb Olin Unferth, to select memoirs veering beyond convention or confession. An acclaimed novelist and memoirist, Unferth sought writers who “explore memoir in a decidedly contemporary manner, while at the same time showing an understanding of the past tradition.” She described “Early Sexual Experiences” as a story in which the author “brings us—and himself—to a dangerous emotional place with a reckless swagger that again and again breaks down into quiet moments of vulnerability.” Unferth and Martin are both writers of the sort that pre-snark culture could unabashedly—and even post-snark culture might objectively—call “literary.”

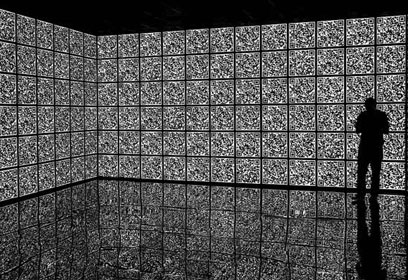

In Martin’s opening scene, the boy narrator’s mother brushes against him in the kitchen. In that perplexing state we call puberty, he finds himself unaccountably aroused. It is an experience rarely explained but often felt. The piece also discusses masturbation and flirtation among stepsiblings. “We read,” C.S. Lewis famously noted, “to know we are not alone.” (This seemed to be the spirit behind the sex education component of health classes in my high school.) Martin is daring in his willingness to mine little-tread experience. The essay wasn’t innovative, however, solely in its content. It was, as literature often is, innovative in its use of voice, the writer’s equivalent of brush strokes.

Any literate person could recognize that the essay was sending up the awkwardness of its subject by pursuing the word “early” literally, obsessively, almost absurdly, to a time before sexuality is usually discussed or imagined, though in ways that are plausible. But Google’s robo-cops are not, in fact, literate people—are not, in fact, people at all. Rather, Google polices AdSense users primarily with one of its trademarked algorithms.

How could we argue with math? AdSense, a product that grosses about $10 billion per year, is based on Google’s search algorithm—the programming, infallible to its creators, that has given order to the chaotic Internet. Was it Aeschylus who spoke of the “Great Algorithm of Justice”? Never mind that Guernica’s essays (brag-sheet warning) have been official selections in “Best American Essays,” or that we have published Nobel laureates and Caine Prize winners.

When Google’s robot told us, in so many words, that what we so naively took for literature was actually something closer to porn, we wondered, naturally, what AdSense’s aesthetic standards were. We also took Google’s interference as a kind of a betrayal. Given Google’s own incredible reading series, authors@google—which has included Guernica contributors and friends, such as Irina Reyn—our staff had always felt positively toward the company, whose very name became our verb for wondering. We thought we could relate to this brand, its story and culture. The sudden imperiousness was therefore all the more surprising and frustrating.

After receiving the warning, we half-heartedly reacquainted ourselves with the AdSense policies and bemoaned them at editorial meetings. The mechanics of re-editing such a funny and irreverent piece daunted us. In place of “My penis swells with joy” would Google prefer . . . ? It was too silly to name the synonyms, even to try the permutations. Nor did asterisks in place of the vowels satisfy us. Nowhere was there a list of banned words or pages in violation. Martin’s piece was merely listed as a sample violation. To disentangle this, we had no one to ask. As far as we could tell, we could do nothing but guess.

In July Google sent us another email. The subject line now read “ACCOUNT STATUS: ACTIVE,” but we also learned that Google had “disabled ad serving to your site.” We sifted through the confusion—active but disabled?—picturing a parent counting to ten above an unruly child. Somehow we gleaned that, in GoogleSpeak, the revocation was not yet irrevocable.

Annoyed by this censorship by attrition, we . . . googled for an answer and discovered a site on which we could appeal.

I filled out the appeal, hastily and indignantly, myself:

1. Have you or your site ever violated the AdSense program policies or Terms & Conditions? If so, how?

Google’s algorithm believes we have because it detected sexually explicit language. But with all due respect, the algorithm is mistaken. The claim is that Clancy Martin’s piece, ‘Early Sexual Experiences,’ which describes graphic depictions of sex from a comedic standpoint is there somehow to earn us salacious hits or to turn us into a porn site. A human being could take one look at our site and see how preposterous that is.

2. Any relevant information that you believe may explain the invalid click activity we detected:

Your algorithm I’m sure is very smart. But . . . it still isn’t human and cannot detect or judge context. We believe context here is everything. There is plenty of sexual language in the piece you’ve found; there is nothing pornographic about it or on our website. You should reinstate our AdSense immediately.

3. Any data in your site traffic logs or reports that indicate suspicious IP addresses, referrers, or requests:

Nope. Just comment spam which we delete.

Weeks and weeks later, we heard back from Google:

Hello,

Thank you for your appeal. We appreciate the additional information you’ve provided, as well as your continued interest in the AdSense program. However, after thoroughly re-reviewing your account data and taking your feedback into consideration, our specialists have confirmed that we’re unable to reinstate your AdSense account. . . .

Sincerely,

The Google AdSense Team

“The AdSense network is considered family-safe,” according to Google’s guidelines. Most likely, our violation came under the heading of “crude or indecent language, including adult stories.” Compared to other banned content such as “sexual aids, devices, and enhancers such as: vibrators, dildos, lubes, sex games, inflatable toys, penis and breast enlargements, and sex instructional videos” and “sexual tips or advice,” our violation seemed the most subjective and imprecise. How does Google define “crude,” “indecent,” or “adult”? What more can the company do but list a bunch of words it considers obscene, alone or in combination, and search for them with the algorithm?

Perhaps Google has good reasons to enforce a policy that protects its family-friendly business. One way or the other, the enforcement is inconsistent. Bigger sites, which make Google more money and subject far more eyeballs to the allegedly offensive material, brim with violations. Take the ban on “sexual tips or advice.” Let’s forget for the moment that this would place educational health or psychology sites under the broad rubric of unacceptable adult content.

I checked men’s sites, which typically use AdSense or DoubleClick, another Google advertising product, and often both. I found both on the sites of Men’s Fitness and Men’s Health. Headlines on Men’s Fitness promised insights into such problems as “My Girlfriend Won’t Let Me Go Down on Her—Help?” or “10 Things She’s Secretly Thinking About Your Penis.” A piece titled “Sex on Wheels” offered tips on how to deal with readers’ woeful lack of in-car sex and teased with the question: “Now how about testing your upholstery’s stain protection?”

More outrageously, AdSense has been known to ban political activists in the midst of their most pitched battles against state repression. In January 2012 a number of graphic photographs got Sahara Reporters, a Nigerian government watchdog site, banned. One set shows Nigerian police officers manhandling a protestor, Ademola Aderinde. Another shows Aderinde’s pants pulled down from his desecrated corpse. Google dropped Sahara Reporters for violation of the ban on “violent content.” Just as the ban on “adult” material makes no room for literary-comedic exceptions, the prohibition of violent content allows no exception for context.

This came at the height of the worldwide Occupy movements. AdSense had been working well, nearly doubling the site’s revenue, until Google cut it off. “Immediately I had the feeling that perhaps Google was working with the Nigerian authorities,” Omoyele Sowore, the founder of the site, wrote me in an email, “because YouTube had done similar things to us.” YouTube was purchased by Google in 2006. Sahara Reporters had much to fear from the government. It was, according to Sowore, “deeply involved in processing tonnes of photos and texts from citizen reporters across Nigeria, as there was ongoing police crackdown on peaceful protesters.” His site was “at the forefront of exposing this [when] I get an alert from Google AdSense that we had violated the rules and would be taken off AdSense.” The photo on the page Google cited was of Aderinde.

On a scale of raw cringe-worthiness—admittedly a hard thing to define—the banned Sahara Reporters photos sit somewhere between the very top—think of Khaled Saeed, the young Egyptian whose mortuary photo helped to spur the Egyptian revolution—and the next tier down, where you might find the less graphic photos from Abu Ghraib, which clearly showed dehumanization but were not as gory as the photo of Saeed. In other words, photos of the sort that led to the downfall of Hosni Mubarak or helped an ostensibly antiwar president get elected in the United States were automatically designated as suspect by Google’s clumsy, illogical, family-friendly rules. The gospel of the Internet and social media as enablers of the public good is seriously challenged by the case of Google AdSense and Sahara Reporters. At the very least, keeping the photos up and available could have helped to stanch the violence. Those photos also support the case for prosecuting civil and human rights crimes—prosecution that, presumably, makes all citizens, families included, safer.

“There’s nothing terribly complicated to Google’s algorithm,” Siva Vaidhyanathan, author of The Googlization of Everything, told me. Like the search function, AdSense software constantly organizes the Web by ranking pages. “The algorithm scores every page in terms of risk. Some it ranks as perfect and others it wants absolutely no association with its advertisers; they’re clearly obscene,” Vaidhyanathan explained. But there’s a problem. If Google let everyone know what exactly its algorithm looks for, people would be able to “game the system,” as Vaidhyanathan put it. So Google doesn’t publish its list of obscene words, which makes AdSense “a trap for any legitimate publication because you never know the rules,” according to Vaidhyanathan.
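
To make the idea concrete, here is a minimal sketch in Python of the kind of context-blind scoring Vaidhyanathan describes. It is not Google’s code, which is unpublished. The word list, the weights, the threshold, and the function names are all invented for illustration.

```python
import re

# Hypothetical word list and weights, invented for this illustration.
# Google does not publish whatever list it actually uses.
FLAGGED_TERMS = {"penis": 3, "sex": 2, "corpse": 3}


def risk_score(text: str) -> int:
    """Sum the weights of flagged words, ignoring context entirely."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(FLAGGED_TERMS.get(word, 0) for word in words)


def is_flagged(text: str, threshold: int = 2) -> bool:
    """Flag a page when its score reaches the (assumed) threshold."""
    return risk_score(text) >= threshold


memoir = "My penis swells with joy"
health = "A clinic guide to safe sex for teenagers"
recipe = "Whisk the eggs and fold in the flour"

for label, page in [("memoir", memoir), ("health", health), ("recipe", recipe)]:
    print(label, risk_score(page), is_flagged(page))
```

A scorer built this way flags the comic memoir and the health guide alike, because it counts words and nothing else. Intent, genre, and tone never enter the calculation.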

In an attempt to break the trap, Guernica got two Google AdSense representatives to talk with us by phone. Google justified its policy as “a simple business decision.” The call ended rather quickly with Google announcing that since “real humans” read the appeals, we might consider filing ours again, but without whatever snideness of tone we may have used the first time. After a second appeal—worded as benignly as my colleague Tana Wojczuk could manage—to Google’s “real human,” the ban remained in place. With the help of the Committee to Protect Journalists, however, Sahara Reporters wrangled Google into not only restoring their AdSense, but also helping them figure out how to make even more money.

It is true that in neither our case nor Sahara Reporters’ did Google absolutely ban the speech in question. But the withholding of money from some sites, for political or aesthetic reasons, will inevitably have the effect of competing some of those sites off the Internet. Without AdSense, Guernica lost what amounted, some months, to about 10 percent of its budget. The competitive atmosphere means that sites with less funding are less sustainable and worse equipped to continue their work. Google’s robo-bureaucracy has been so widely detrimental that there has grown a virtual cottage industry of advice, online catharsis, and confession over AdSense rejection, and even a book on how to beat an AdSense ban.

Henry Miller’s Tropic of Cancer was once prohibited by the U.S. government. When Grove Press, then owned by Barney Rosset, printed the book anyway, Rosset could in theory have sent staff to local bookstores with boxes of the book in their cars, and so on, whether legally or not. Web-only magazines don’t have that option.

Today Google will sell you that Miller book, even give you a few pages for free. Its value as literature was secured by Grove and Rosset, who fought seemingly endless cases against government censors because, yes, Tropic of Cancer was considered family-unfriendly. Martin’s swelling appendages are tame by Miller’s standards. Miller offers penises being stroked and left on sidewalks. He has six-foot whale penises and a “Gandhi with a penis.” Since this tome is available on Google Books, may we assume that by Google’s own standards it is not porn? Perhaps the vaunted algorithm is just confused: on one occasion when I searched Google for the book, an ad for cancercenter.com appeared.

But what if Google had polled its advertisers and they agreed with the algorithm: they don’t want their ad next to Martin’s story? This is the problem. Google doesn’t poll. Instead it makes an all-or-nothing judgment for its advertisers, even though it would be simple to obtain their input. Publishers hosting AdSense are able to remove ads they dislike, so why not reverse that principle and allow advertisers to avoid publishers they don’t want to associate with? What if, before threatening its users, Google sent an automated email to advertisers asking if they found our comedic essay, the Occupy Nigeria photos, or any other at-risk material objectionable, salacious, or in bad faith? Wouldn’t that deal with Google’s quasi-censorship via automation, and wouldn’t it also better provide that hallowed value of the grand virtual marketplace: choice?

Or how about just removing AdSense from the one or two pages that the algorithm finds objectionable? Isn’t Google intelligent enough, fair enough, to allow for edge, innovation, and a difference of opinion on one page without smothering a whole enterprise’s revenue stream?

To do so would take not just an algorithm hunting for bad words, but a heart and mind looking at the worthiness, morality, and aesthetic power of the enterprise writ large. And for that Google may be ill equipped.

Google’s absolutist policy is marred because its implementation feels arbitrary. Just as a jury, given ample evidence, can distinguish among manslaughter, self-defense, a killing during war, and malicious murder, in daily life we consider the context of moral acts, a context that usually is revealed by something that can be surmised but not measured. I mean intent. Not only does Google have an unsophisticated process for gauging context or intent, but the company also seems to be run by people who just don’t care about it and can’t be bothered to, even after you ask nicely.

Update (September 26, 2013): While this story was being filed Google approached Guernica to help the magazine, as with Sahara Reporters, make more money. Guernica declined.