In the jargon of academia, the study of what we can know, and how we can know it, is called “epistemology.” During the 1980s, philosopher Richard Rorty declared it dead and bid it good riddance. To Rorty and many other thinkers of that era, the idea that we even needed a theory of knowledge at all rested on outmoded, Cartesian assumptions that the mind was an innocent mirror of nature; he urged that we throw out the baby—“truth”—with the bathwater of seventeenth-century rationalism. What’s the Use of Truth?, he asked in the provocative title of his final book (published in 2007). His answer, like that of many of his contemporaries, was clear: not much.

How things have changed. Rorty wrote his major works before smartphones, social media, and Google. And even through the Internet’s early days, many believed that it could only enhance the democratization of information—if it had any impact on society at all. The ensuing decades have tempered that optimism, but they’ve also helped make the problem of knowledge more urgent, more grounded. When millions of voters believe, despite all evidence, that the election was stolen, that vaccines are dangerous, and that a cabal of child predators rules the world from a pizza parlor’s basement, it becomes clear that we cannot afford to ignore how knowledge is formed and distorted. We are living through an epistemological crisis.

Epistemology is thus not only poised to be “first philosophy” again. In a real sense, we must all become epistemologists now—specifically of a kind of epistemology that grapples with the challenges of the political world, a political epistemology.

Interest in how knowledge is acquired and distributed in social groups has long been a substantive field of inquiry in the social sciences. But with notable exceptions—such as W. E. B. Du Bois, John Dewey, Thomas Kuhn, and Michel Foucault—twentieth-century philosophers mostly focused on the individual: their central concern was how I know, not how we know. That began to change near the end of the century, as feminist theorists such as Linda Alcoff and Black philosophers such as Charles Mills called attention to not only the social dimensions of knowledge but also its opposite, ignorance. In addition, and working largely independently of these traditions, analytic philosophers, led by Alvin Goldman, launched inquiries into questions of testimony (when should we trust what others tell us?), group cognition, and disagreement between peers and experts.

The overall result has been a shift in philosophical attention toward questions of how groups of people decide they know things. This attention, not surprisingly, is now increasingly focused on how the digital and the political intersect to alter how we produce and consume information. This interest is on display in Cailin O’Connor and James Weatherall’s recent book The Misinformation Age: How False Beliefs Spread (2019), as well as in C. Thi Nguyen’s work on the distinction between echo chambers (where members actively distrust “outside” sources) and epistemic bubbles (where members just lack relevant information). These examples highlight how philosophy can contribute to our most urgent cultural questions about how we come to believe what we think we know.

A notable theme running through much of this work is that we can study the social foundations of knowledge without having to punt on concepts like objectivity and truth, even if we must reimagine how we access and realize them as values. And that is notable at a time when many see the value of truth in democracy as under threat.

To understand what this means, it can be helpful to think through some of the problems we need a political epistemology to help solve—what we might call the epistemic threats to democracy. Democracies are especially vulnerable to such threats because in needing the deliberative participation of their citizens, they must place a special value on truth. By this I don’t mean (as some conservatives seem to think whenever progressives talk about truth) that democracies should try to get everyone to believe the same things. That’s not even possible, let alone democratic. Rather, democracies must place special value on those institutions and practices that help us to reliably pursue the truth—to acquire knowledge as opposed to lies, fact rather than propaganda. The epistemic threats to democracy are threats to that value and those institutions.

Indeed, a striking feature of our current political landscape is that we disagree not just over values (which is healthy in a democracy), and not just over facts (which is inevitable), but over our very standards for determining what the facts are. Call this knowledge polarization, or polarization over who knows—which experts to trust, and what is rational and what isn’t.

Americans’ reactions to the COVID-19 pandemic serve as a painful illustration of the dangers of this kind of polarization. During the early days of the epidemic in the United States, and even as the rate of infection was spiking across the country, polls from Knight/Gallup suggested that a person’s political views, and their news sources, predicted how seriously they perceived the public health risk. Republicans were more likely to believe the lethality of the virus was overblown. As one Twitter wag put it, “Sorry liberals but we don’t trust Dr. Anthony Fauci.”

Research on “epistemic spillovers” indicates how deeply politicized knowledge polarization really is. An epistemic spillover occurs when political convictions influence how much we are willing to trust someone’s expertise at a task unrelated to politics. In one study that explored how this works in everyday life, participants were able to learn both about the political orientation of other participants, and their competency at an unrelated, non-political task (often extremely basic, such as categorizing shapes). Then participants were asked who they would consult to aid them in doing the task themselves. The result: people were more likely to trust those of the same political tribe even for something as banal as identifying shapes. And they continued to do so even when they were presented with evidence that their political cohorts were worse at the task and even when there were financial incentives to follow that evidence. Put another way, Democrats are more likely to trust Democratic doctors, Democratic plumbers, and Democratic accountants than they are Republican ones—even when they have evidence that this will lead to worse results.

This and similar studies suggest that ideological politics and knowledge polarization feed each other in a feedback loop of mistrust. If you think that Democrats are brainwashed slaves of the Liberal Media, you aren’t going to trust their supposed expertise when they tell you that there is a pandemic, or that the Earth is warming, or that the election was fair. Indeed, mistrust on both sides of the political spectrum encourages mutual skepticism—the very thing epistemologists have often been accused of spending too much time worrying about.

This skepticism can prevent people from following the evidence to life-saving conclusions, leading them to refuse to wear masks or socially distance. And, in at least two ways, it can also threaten a society’s commitment to protecting and fairly distributing accurate information.

First, when people mistrust institutional expertise for political reasons—whether that’s about vaccines or climate change—they will not value the research guided by such expertise. And that in turn erodes the democratic value of truth-seeking (realized, for example, by funding federal research institutions), which, for all of its failings, is meant to help us figure out what to believe and how to act, including in the voting booth.

Second, skeptical mistrust can also—bizarrely—cause people to dig in. The ancient Greek Pyrrhonists thought that skepticism was healthy because it would make one more, well, skeptical—that is, less likely to believe stupid stuff. But the sad history of humanity suggests they were way too optimistic: knowledge polarization seems to make people more confident in their opinions rather than less.

Why does that happen? One possibility is that our psychological frailties—certain attitudes of mind—get baked into our ideologies. And perhaps no attitude is more toxic than intellectual arrogance, the psychosocial attitude that you have nothing to learn from anyone else because you already know it all. A version of this popular on the Internet is the Dunning–Kruger effect, which posits that people with limited knowledge are prone to overestimate their own competence—they don’t know what they don’t know. In her forthcoming The Mismeasure of the Self, philosopher Alessandra Tanesini argues that such arrogance isn’t just misplaced overconfidence; it is a confusion of truth with ego.

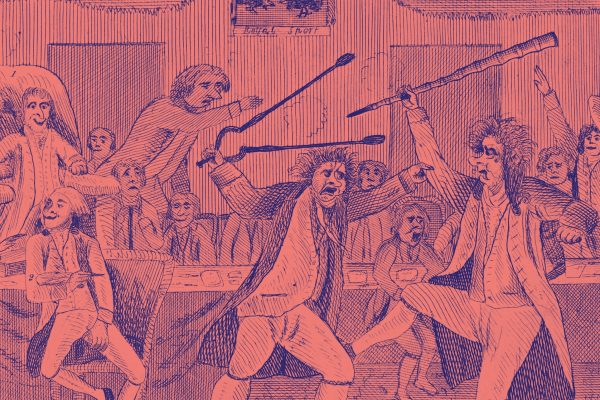

The idea that arrogance is bad news, both personally and psychologically, isn’t new. Sixteenth-century philosopher Michel de Montaigne was convinced it led to dogmatic extremism and that it could result in political violence. Dogmatic zeal, he famously said, did wonders for hatred but never pulled anyone toward goodness: “there is nothing more wretched nor arrogant than man.” But the real political problem is not arrogant individuals per se, but arrogant ideologies. These are ideologies built around a central conviction that “we” know (the secret truths, the real nature of reality) and “they” don’t. To those in the grip of such an ideology, countervailing evidence is perceived as an existential threat—to “who we are,” to the American Way, to the white race, and so on. The arrogant ideology, in other words, makes itself immune to revision by evidence; it encourages in its adherents what José Medina has called “active ignorance.”

Arrogance engenders entitlement, and entitlement in turn breeds resentment—forming the poisonous psychological soil for extremism. And, most importantly, it can be easily encouraged. As Tanesini stresses, arrogance is at root founded on insecurity—on the fear of real or imaginary threats, be they from Satan-worshiping child sex-traffickers or Jewish lasers from outer space.

This brings us to the most obvious epistemic threat to democracy, one that feeds and is fed by the others: conspiracy theories and what historian Timothy D. Snyder has called Big Lies. There is often debate about whether people saying and sharing such things “really” believe them, and to what degree endorsing them is a form of partisan identity expression. But this may be the wrong question entirely.

Whatever the psychology (and it surely varies) of the individuals involved, the January 6 attack on the Capitol suggests that we should be less concerned with sincerity of belief and more with whether beliefs, sincere or not, inspire action. While you can be committed to act on behalf of an idea without believing it, from the standpoint of politics, it is that commitment that matters.

Put slightly differently, what we really need to understand is how big political lies turn into convictions. A conviction is not just something one “deeply believes” (I believe that two and two make four but that isn’t a conviction). A conviction is an identity-reflecting commitment. It embodies the kind of person you aspire to be, the kind of group you aspire to be a part of. Convictions inspire and they inflame. And they compose our ideologies, our image of political reality. And as philosophers Quassim Cassam and Jason Stanley have both argued, an essential insight into Big Lies is that they function as political propaganda, as ways of propagating a particular worldview. That’s their political harm: they motivate and rationalize extremist action.

But Big Lies also do something else: they degrade the value of truth and the democratic value of its pursuit.

To understand how this works, imagine that during a football game, a player runs into the stands but declares, in the face of reality and instant replay, that he nonetheless scored a touchdown. If he persists, he’d normally be ignored, or even penalized. But if he—or his team—holds some power (perhaps he owns the field), then he may be able to compel the game to continue as if his lie were true. And if the game continues, then his lie will have succeeded—even if most people (even his own fans) don’t “really” believe he was in bounds. That’s because the lie functions not just to deceive, but to show that power matters more than truth. It is a lesson that won’t be lost on anyone should the game go on. He has shown, to both teams, that the rules no longer really matter, because the liar has made people treat the lie as true.

That’s the epistemic threat to democracy from Big Lies and conspiracies. They actively undermine people’s willingness to adhere to a common set of “epistemic rules”—about what counts as evidence and what doesn’t. And that’s why responding to them matters: the more folks get away with them, the more gas is poured on the fires of knowledge polarization and toxic arrogance.

Those working in political epistemology—including those contributing to a new scholarly “handbook” on the topic—can help us come to grips with these threats. But they can also help us understand how to combat them.

An ongoing argument concerns whether fact-checking Big Lies and conspiracies ever helps. Some—citing what was called the “backfire effect”—claim that it can actually make things worse (by causing those in the grip of the lies to dig in). Recent work suggests that effect is overblown, thankfully. But it is still worth clarifying what “helping” even means here.

To those in the grip of arrogant ideologies, convinced that only they know and everyone else is a moron, it is unclear, at best, that just lobbing more facts at them is going to help at all—if by “help” we mean “change their minds.” To this point, we must be clear: in such cases, what matters is not changing their minds but keeping them from power.

But that’s the short game. We must also be concerned with the long game. Fortunately, as the Finns have been showing, digital literacy pays dividends when started at an early age. We are just beginning to understand how knowledge is consumed, transmitted, and corrupted on the Internet. But one thing we do know is that the intense personalization of online information is feeding knowledge polarization. Almost everything we encounter online—from the news on our Facebook feed to the ads on our favorite news sites—is tailored to fit our preferences. And that means that the algorithms that make it so gloriously simple to find what we want to watch also make it extremely unlikely we will ever casually encounter anything except just those “facts” we are already prone to believe. Teaching this to young children—and getting them to track the difference between conspiracy and critical thinking—is a no-brainer.

Another thing we can do, to use Cassam’s term, is “out” lies and conspiracies for the political poison that they are. It is important to do so, not because it will convince the liars, but because doing so demonstrates our own convictions and values—including the value of truth in a democracy. That’s the thing about facts and evidence: they matter not just because they help us see the world more clearly, but because they serve an essential democratic function. The epistemic rules are part of what makes the democratic game what it is, a space where we try to solve problems not with a gun but with an exchange of reason.

These suggestions of course will only get the boulder so far up the hill. We can’t be content with playing by the same old epistemic rules we have always used. For one thing, technological changes in how we receive information obviously require changes in how we evaluate evidence. But for another, we need to be aware of how our institutions of knowledge and belief have been structured to reproduce the arrogant ideologies of racism and nationalism. And as feminist and Black philosophers, from Sandra Harding to Lewis Gordon, have been saying for decades, we need to realize that those same institutions also isolate and marginalize those who, ironically, are best placed to see their faults. So we need to respect epistemic rules, yes, but we also need to write new ones. To accept that task is to accept the political part of political epistemology.

We also can’t ignore the need to say more about the notion at the very center of any epistemological enterprise. It is easy, at least after the smoke clears, to see that political judgments are often false. From immigration to health care, we get it wrong more often than we get it right. But that raises the question of what it means to get it right in politics in the first place—of talking about the truth in regard to anything that involves people. The difficulty of this challenge is part of what made Rorty abandon the notion of truth as useless in politics. But our situation is not his. We no longer have the option of putting epistemology aside. We must, instead, reinvent it.