Abhijit Banerjee calls for an end to lazy thinking in the design of aid programs. What we need instead, he says, are randomized impact evaluations of the sort promoted by his organization, MIT’s Abdul Latif Jameel Poverty Action Lab.

I agree that aid agencies should do more randomized impact evaluations. In fact, they should be implemented whenever possible. But this statement needs to be put into perspective, as the portion of development aid that can be subject to randomized impact evaluation is severely limited. Testing must not be promoted exclusively and at the expense of other valuable approaches. And while randomized impact evaluations can yield useful information, the search for technical rigor must not take precedence over practical lesson-learning.

When, then, can randomized impact evaluations be used? Banerjee compares aid programs to drugs; the analogy is a good one. Randomized approaches can be used to evaluate discrete, homogeneous interventions, much like a pill in a drug trial. But most of the projects of large official agencies—which constitute the bulk of aid—do not resemble the conditions of medical testing.

Over the last 20 years a large share of aid has been designated for broad reforms: labor market reform, reductions of producer and consumer subsidies, privatization, interest rate increases, and the exchange rate reform noted by Banerjee. In the last 15 years increasing amounts of aid have funded the development of democratic institutions, anti-corruption bodies, policy think tanks, and the collation of voter rolls. Donor agencies also supply experts to support these kinds of reforms and improve the quality of developing-country institutions. For example, activities to improve learning outcomes—such as the use of flip charts or textbooks—may be part of a larger project that includes computerizing the country’s education database, providing training to central ministry staff, and constructing a new teacher training college. Such projects cannot be subject to randomized evaluation.

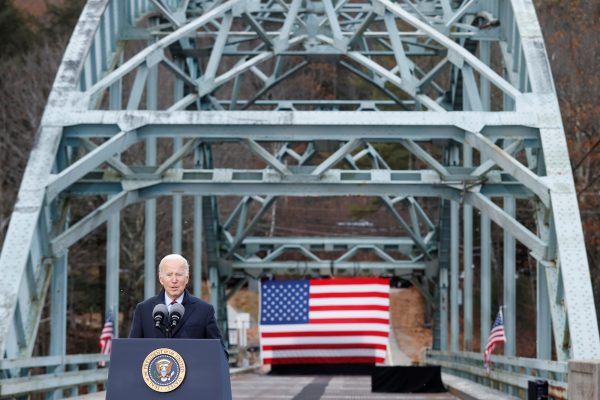

Unlike the smaller, NGO-supported programs cited by Banerjee, large-scale infrastructure projects supported by official agencies—such as rehabilitating a port or building a major bridge—are not amenable to randomization. Donors have supported systematic social-service provision across countries through the construction of health and education facilities and training for their staff based on mapping exercises and manpower planning: children need schools and teachers before they can go to school and learn. These are not sufficient conditions, but they are necessary. Like broad policy reforms and large-scale infrastructure projects, they cannot be subject to randomization.

And finally, donor agencies increasingly provide budget support—money the government can use as it wishes. In this case it is the government’s programs, not the donors’, which need to be evaluated. Donors must ask how their budget support affects the level and composition of expenditure. Again, randomized approaches are of no help.

But other kinds of evaluations are both possible and useful, and they are being put into practice. The World Bank’s Independent Evaluation Group carries out project assessments for one quarter of all its completed projects. In recent years, the group has reported on the issues of impact and effectiveness of trade and financial sector reform, debt relief, and support to poverty reduction strategies. It has also demonstrated the importance of investments in social infrastructure for improving social outcomes—for example, in reports on basic education in Ghana and health services in Bangladesh.

Are there other ways to evaluate projects? There is a well-established method of identifying which projects should get funded called cost-benefit analysis. This analysis lists all the costs and benefits from a project and assigns a value to them. This includes putting a price on things that the market may not value, or may not value appropriately, such as adverse environmental impacts. The method was developed over 30 years ago and was widely adopted by the World Bank and other agencies. It subsequently fell out of favor for the poor reason that it was assumed not applicable to increasingly important social investments (it is), and for the better reason that it couldn’t assess whether the institutions responsible for service delivery actually worked. When they didn’t, few if any benefits were realized.
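The arithmetic behind such an analysis can be sketched in a few lines. What follows is only an illustration, not the procedure of the World Bank or any other agency: every figure is invented, and the function names, the 10 percent discount rate, and the `delivery_share` parameter (the fraction of planned benefits that the responsible institution actually delivers) are assumptions introduced here.

```python
def net_present_value(net_flows, rate):
    """Discount yearly net flows (year 0 first) back to the present."""
    return sum(flow / (1 + rate) ** year for year, flow in enumerate(net_flows))


def project_npv(costs, benefits, unpriced_costs, rate, delivery_share=1.0):
    """Net present value of a project.

    costs          -- yearly project outlays
    benefits       -- yearly benefits if services are delivered as planned
    unpriced_costs -- yearly costs the market does not price (for example
                      environmental damage), valued by the analyst
    delivery_share -- hypothetical fraction of planned benefits that are
                      actually realized; the costs are incurred regardless
    """
    net_flows = [
        delivery_share * b - c - u
        for b, c, u in zip(benefits, costs, unpriced_costs)
    ]
    return net_present_value(net_flows, rate)


# Invented figures for a four-year horizon, in arbitrary money units.
costs = [100.0, 0.0, 0.0, 0.0]
benefits = [0.0, 50.0, 50.0, 50.0]
unpriced_costs = [0.0, 5.0, 5.0, 5.0]
rate = 0.10  # assumed discount rate

npv_as_planned = project_npv(costs, benefits, unpriced_costs, rate)
npv_weak_delivery = project_npv(costs, benefits, unpriced_costs, rate,
                                delivery_share=0.5)

print(f"NPV if benefits are delivered as planned: {npv_as_planned:.2f}")
print(f"NPV if only half the benefits are realized: {npv_weak_delivery:.2f}")
```

With these made-up numbers the project passes the test when benefits arrive as planned and fails it when only half of them do, which is the weakness described above: the calculation is only as good as its assumption that the delivering institution works.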

Ex post evaluations therefore started to focus much more on process issues. Process evaluations look at how well project management is working and whether implementation has been satisfactory. They can draw conclusions about sustainability based on the strength of the institution delivering the services and the adequacy of financing once aid to the project stops.

Donor agencies also conduct national evaluations, which assess an aid partnership over a ten-year period. Such studies are more than the sum of their parts, since donors seek influence through demonstration effects, informal interactions with government, and more formal discussions about country and aid strategies. Stakeholder analysis is needed to conduct such studies, not randomization. Policy reforms may be more formally evaluated using modelling approaches. Process-oriented approaches have general applicability to programs, policies, and projects, though they are not designed to evaluate impact.

Cost-benefit analysis was introduced to do precisely what Banerjee calls for—deciding what should be financed—but not by examining all the possible uses of funds, as he suggests. Calculating the benefits requires an assumption about impact, and it is obviously best if these assumptions are based on evidence from impact evaluations. But such impact evaluations can only adopt a randomized approach in a small minority of cases.

Cost-benefit analysis may be applied more widely, though it must incorporate a more comprehensive view of institutional conditions. For instance, if we build a road, we need to know who will maintain it, whether they have the necessary skills and equipment, and where the money for maintenance will come from. In the case of education, we need to know what incentives teachers have to put improved methods into practice.

At the very least impact evaluations need to report cost-effectiveness, but better still they should offer a proper cost-benefit analysis. They must attach an economic value to things not priced by the market. To understand why aid has worked or not, the analysis should examine not only a specific impact but all levels of project implementation. An Independent Evaluation Group analysis of support to extension services in Kenya, for example, found little impact. The failure was readily explained by data that showed both a weak link between new research and extension advice and little change in the practice of extension workers. Similarly, a study of a nutrition program in Bangladesh found little impact. In this case the study identified the mis-targeting of potential beneficiaries and the failure of mothers to put into practice the nutritional knowledge they acquired through counselling. A randomized approach might have only shown that the project didn’t work, and would not have helped explain the causal factors.

In the end Banerjee gives the impression that aid is not being evaluated. This claim is simply incorrect. What he means is that there are too few randomized impact evaluations. There are good reasons for this, though there is scope for greater use of the approach. We can readily endorse Banerjee’s ends, but endorsing his means would put an end to many effective things aid donors do. Instead, the proper use of cost-benefit analysis would do more to help donors make good choices.

The views expressed here are the authors’ and do not necessarily reflect those of the World Bank or the Independent Evaluation Group.